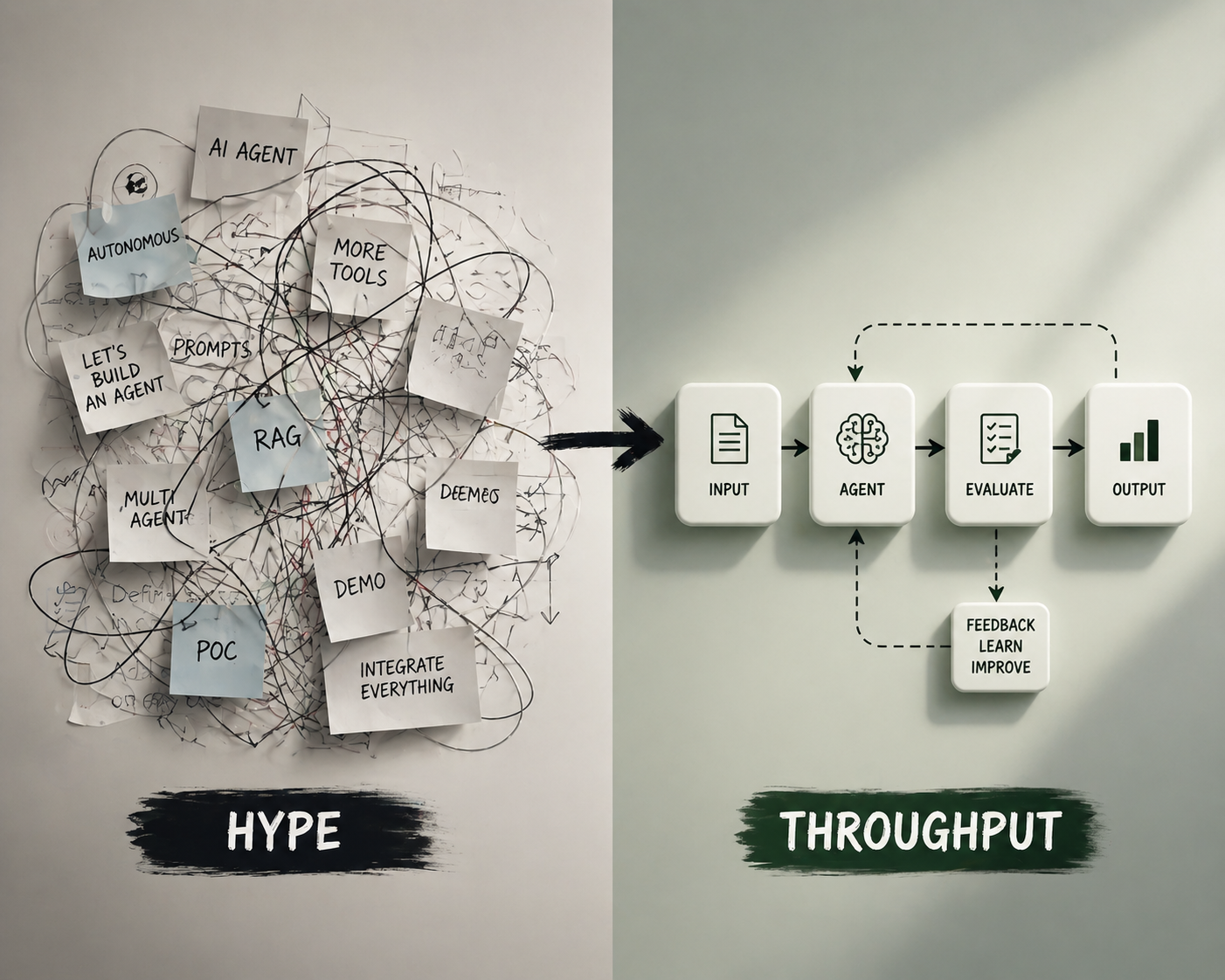

From Hype to Throughput: Landing Your First Agentic AI Use Case

Most teams are building AI agents. Few are getting real value. The difference is not better prompts; it’s better systems. Here’s how to land your first agentic AI use case using a Vibe-to-Value loop, with evals, guardrails, and measurable outcomes.

Everyone is building AI agents.

Very few teams are getting consistent outcomes from them.

That gap matters.

Because the problem is no longer access to models, tools, or frameworks.

The problem is turning experiments into something that actually produces value, repeatedly.

Here’s the uncomfortable truth:

Agents don’t create value. Systems around them do.

If you don’t design for that, you’ll stay stuck in demo mode.

The Real Problem Isn’t “Use Cases”

Most teams start in the same place: “We need AI use cases.”

So they brainstorm. They generate a list. They prioritize.

And then… nothing really lands.

Because they’re solving the wrong problem.

You don’t need more use cases.

You need one that actually ships and produces measurable outcomes.

Common failure modes:

- Starting too big

- Optimizing for demos instead of outcomes

- No clear definition of “done”

- No evaluation strategy

- Treating agents like chatbots instead of systems

The goal is not to explore.

The goal is to land.

What a Good First Use Case Looks Like

Your first agentic use case should feel slightly underwhelming.

That’s a feature.

Look for something that is:

Painful today

A real bottleneck. Not interesting. Not novel. Painful.

Multi-step

Requires reasoning, transformation, or stitching.

Measurable

You can define what “good” looks like.

Low risk

If it fails, nothing critical breaks.

Repeatable

It happens often enough to matter.

Bad first use cases:

- “AI assistant for everything”

- “Fully autonomous system”

- “End-to-end workflow replacement”

Good first use cases:

- Generate a structured first draft from known inputs

- Normalize and enrich incoming data

- Produce insights from a defined dataset

If it sounds boring, you’re probably on the right track.

From Vibe to Value

Most teams operate in what I call the “vibe layer.”

“This feels like something AI should help with.”

That’s fine. That’s where it starts.

But value doesn’t come from the vibe.

It comes from closing the loop.

1. Intent (The Vibe)

“This process is slow, manual, and inconsistent.”

2. Build Fast

One workflow. No platform thinking. Just make it work.

3. Evaluate Immediately

Define:

- Correctness

- Completeness

- Consistency

Score real outputs against real examples.

4. Human Judgment

Focus on failure modes, not blanket review.

5. Promote Carefully

Limited real usage. Not a sandbox.

6. Learn and Iterate

Fix what breaks. Tighten structure.

7. Expand or Kill

Scale what works. Drop what doesn’t.

Most teams stop at step 2.

That’s why nothing ships.

What This Actually Looks Like (Real Example)

Take a common workflow: sales insight generation.

Before

- Input: raw spreadsheet + call notes

- Analyst manually:

  - Cleans data

  - Identifies patterns

  - Writes summary

- Time: 2–4 hours per report

- Output quality: inconsistent

First agentic version

- Input: same spreadsheet + notes

- Agent flow:

  - Normalize data structure

  - Extract key signals

  - Generate structured summary

  - Flag anomalies or gaps
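To make the shape of that flow concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the actual implementation: the column names (`region`, `revenue`), the `Report` fields, and every function name are hypothetical, and the signal extraction is a plain aggregation standing in for what would likely be a model call.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    """Structured summary produced by the flow (field names are hypothetical)."""
    total_revenue: float
    top_region: str
    anomalies: list = field(default_factory=list)


def normalize(rows):
    """Step 1: trim and lowercase column names so later steps see one schema."""
    return [{key.strip().lower(): value for key, value in row.items()} for row in rows]


def flag_gaps(rows):
    """Step 4: deterministic gap check for rows with a missing revenue value."""
    return [
        f"row {index}: missing revenue"
        for index, row in enumerate(rows)
        if row.get("revenue") is None
    ]


def build_report(raw_rows):
    """Run the whole flow: normalize, extract signals, summarize, flag gaps."""
    rows = normalize(raw_rows)
    anomalies = flag_gaps(rows)

    # Step 2: extract a simple signal (revenue per region) from valid rows only.
    by_region = {}
    for row in rows:
        if row.get("revenue") is not None:
            region = row["region"]
            by_region[region] = by_region.get(region, 0.0) + float(row["revenue"])

    # Step 3: emit a fixed structure instead of free text.
    top_region = max(by_region, key=by_region.get) if by_region else ""
    return Report(sum(by_region.values()), top_region, anomalies)
```

The point of the sketch is the fixed output structure: because every run returns the same fields, the result can be checked and scored automatically.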

Evaluation setup

- 15 known “good” reports

- Scoring:

  - 1–5 for accuracy

  - 1–5 for completeness

- Simple checks:

  - Required fields present

  - No hallucinated metrics
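The two simple checks can be written as plain functions with no model involved. The sketch below is one possible version, assuming the report is a dictionary and that the set of numbers present in the source data is already available; `REQUIRED_FIELDS` and both function names are hypothetical. The number check is deliberately crude: it will misread formatted values such as "1,200" and cannot catch a correct number attached to the wrong metric.

```python
import re

# Hypothetical schema: the fields every report must contain.
REQUIRED_FIELDS = ("summary", "key_signals", "anomalies")

# Matches integers and simple decimals such as 340 or 12.5.
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def missing_fields(report):
    """Return required fields that are absent or empty in the report."""
    return [name for name in REQUIRED_FIELDS if not report.get(name)]


def unsupported_numbers(summary, source_values):
    """Return numbers quoted in the summary that never appear in the source data."""
    quoted = {float(match) for match in NUMBER.findall(summary)}
    return sorted(quoted - set(source_values))
```

A report passes only if both functions return an empty list. Anything they return is a concrete, reviewable failure rather than a general impression of quality.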

Result after iteration

- Time: ~30 minutes

- Output: “good enough” for first draft

- Human role: refine, not create

This is not magic.

It is a constrained system with:

- Clear inputs

- Defined outputs

- Measurable quality

That is why it works.

The Playbook: Landing Your First Use Case

Step 1: Find a bottleneck

Something slow, manual, and repeated.

Step 2: Define success in one sentence

Example: “Reduce this process from 4 hours to 30 minutes with acceptable quality.”

If you can’t define success, don’t build.

Step 3: Build the smallest viable flow

One workflow. Not reusable. Not general.

Step 4: Add evaluation on day one

Make this concrete:

- Start with 10–20 real examples

- Define pass/fail criteria

- Add simple scoring (1–5)

- Use LLM-as-judge where needed

- Add deterministic checks where possible

Track:

- Where it fails

- How it fails

- How often it fails

If you’re not measuring this, you are guessing.
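One way to track where, how, and how often it fails is to record a result per example and tally the failure modes. This is a minimal sketch under assumptions: the pass threshold of 4, the `EvalResult` fields, and the failure-mode labels are all hypothetical, and the scores could come from a human reviewer or an LLM judge.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

PASS_THRESHOLD = 4  # assumption: both scores must reach 4 out of 5 to pass


@dataclass
class EvalResult:
    example_id: str
    accuracy: int                       # 1 to 5
    completeness: int                   # 1 to 5
    failure_mode: Optional[str] = None  # label from a deterministic check, if any


def passed(result):
    """An example passes when no check fired and both scores meet the threshold."""
    lowest = min(result.accuracy, result.completeness)
    return result.failure_mode is None and lowest >= PASS_THRESHOLD


def summarize(results):
    """Report pass rate, failure modes by frequency, and the failing example ids."""
    if not results:
        raise ValueError("no evaluation results to summarize")
    failures = [r for r in results if not passed(r)]
    modes = Counter(r.failure_mode or "low_score" for r in failures)
    return {
        "pass_rate": (len(results) - len(failures)) / len(results),
        "failure_modes": modes.most_common(),
        "failed_ids": [r.example_id for r in failures],
    }
```

Running `summarize` after every change gives a before-and-after comparison on the same examples, which is what turns iteration into measurement.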

Step 5: Run a tight loop (2–6 weeks)

Daily or near-daily iteration.

You are not observing behavior.

You are shaping it.

Step 6: Ship with guardrails

In practice, this means:

- Confidence thresholds for outputs

- Required fields and structure validation

- Human review for low-confidence cases

- Logging and traceability

- A clear rollback path

Not theoretical safety.

Operational control.
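As a rough illustration of what operational control can look like in code, the sketch below routes each output to one of three outcomes and logs the decision. Everything named here is an assumption: the 0.8 threshold, the required fields, and the idea that a `confidence` score between 0 and 1 is available (for example from a judge model or from heuristic checks).

```python
import logging
from enum import Enum

logger = logging.getLogger("agent.guardrails")

CONFIDENCE_THRESHOLD = 0.8                   # assumption: tune against eval data
REQUIRED_FIELDS = ("summary", "key_signals")  # hypothetical output schema


class Route(Enum):
    AUTO_ACCEPT = "auto_accept"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"


def route_output(output, confidence):
    """Decide what happens to one agent output and log the decision."""
    missing = [name for name in REQUIRED_FIELDS if not output.get(name)]
    if missing:
        # Structure validation failed: never show this to a user.
        route = Route.REJECT
    elif confidence < CONFIDENCE_THRESHOLD:
        # Structurally valid but uncertain: send to a person.
        route = Route.HUMAN_REVIEW
    else:
        route = Route.AUTO_ACCEPT
    # Log every decision so behavior can be traced later.
    logger.info("route=%s confidence=%.2f missing=%s", route.value, confidence, missing)
    return route
```

A rollback path does not need to be more elaborate than this: setting the threshold above 1.0 sends every structurally valid output to human review without a code change elsewhere.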

This is the pattern I use to help teams move from pilots to production in their first use case.

Hard Truth: Most First Use Cases Fail

Not because the model is bad.

Because the team cannot:

- Define success

- Measure output quality

- Identify failure modes

- Control behavior over time

So the system feels unpredictable.

Trust drops.

Adoption dies.

This is where most efforts stall.

The Real Differentiator

Most teams focus on:

- Better prompts

- More tools

- More autonomy

Winning teams focus on:

- Evaluation

- Workflow design

- Observability

- Control points

The hard part is not building the agent.

It’s building the system that tells you when it’s wrong.

From Experimentation to Throughput

The goal of your first agentic use case is not innovation.

It’s throughput.

It’s proving that you can take a workflow, apply AI, and produce consistent, measurable outcomes.

Once you have done that one time, you get better at doing it again.

Not easier.

Better.

That’s when AI stops being a demo and starts becoming a capability.